Joseph Cole, Ph.D. — Co-founder & CEO, Rose City Robotics

A Ph.D. applied physicist and algorithm developer with over 20 years of deep technical experience spanning machine learning, image and signal processing, embedded systems, and high-performance computing. Joseph has designed algorithms for semiconductor inspection at Applied Materials, built cardiac ultrasound imaging pipelines on NVIDIA Jetson hardware at YorLabs, modeled nanophotonics at Rice University, and processed 3D seismic datasets on supercomputing clusters at CGGVeritas. He now leads the technical vision at Rose City Robotics, building high-fidelity synthetic data pipelines for training physical AI systems.

Information Pursuit

Active sensing research with the Maseeh Department of Mathematics and Statistics at Portland State University.

A lot of the newest "pixel-to-action" robotics demos look magical until you ask the uncomfortable question: how much data did it take? In one recent interview, an algorithm developer reported using over 300 hours of teleoperation data to teach a robot a complex task a human can do in nine seconds.

That gap is the focus of Joseph Cole's research with professors in PSU's Maseeh Department of Mathematics and Statistics. Instead of trying to cover every edge case with more demonstrations, the goal is robots that reduce uncertainty on purpose: by moving their sensor to gather the most informative next observation, and by showing their work as they do it.

The technical name for that is Information Pursuit (IP). The core design choice is to treat perception as a Bayesian inference loop rather than a one-shot prediction. The robot maintains a belief over a target state, then chooses the next sensor viewpoint to shrink that uncertainty as efficiently as possible.
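One common way to write such a loop down is sketched here. The notation is an assumption made for illustration (target state $Y$, viewpoint $a$, observation $o$, belief $p_t$), and the greedy mutual-information objective is the standard textbook choice, not necessarily the exact objective used in this research.

```latex
\begin{align}
  % Belief over the target state after t observations taken at viewpoints a_{1:t}
  p_t(y) &= P(Y = y \mid o_{1:t}, a_{1:t}) \\
  % Greedy viewpoint choice: largest expected drop in entropy (mutual information)
  a_{t+1} &= \arg\max_{a} \Big[ H(p_t)
             - \mathbb{E}_{o \sim p_t(o \mid a)}\, H\big(p_t(\cdot \mid o, a)\big) \Big] \\
  % Bayesian update once o_{t+1} has been observed from viewpoint a_{t+1}
  p_{t+1}(y) &= \frac{p_t(y)\, p(o_{t+1} \mid y, a_{t+1})}
                     {\sum_{y'} p_t(y')\, p(o_{t+1} \mid y', a_{t+1})}
\end{align}
```

Here $H$ is Shannon entropy and $p_t(o \mid a) = \sum_y p_t(y)\, p(o \mid y, a)$ is the predicted observation distribution for viewpoint $a$. The bracketed term is the expected information gain of looking from $a$.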

What makes this version of IP different is that it runs in an embedding space. The query is an embodied action (move the camera), and the observation is a point in a learned latent space rather than a raw pixel array. The explanation is built into the mechanism: the robot's belief state is explicit, the reason for the next action is explicit, and the sequence of queries is an audit trail a human can review.
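A toy version of that loop might look like the sketch below. It is illustrative only and not the research implementation: the function names are hypothetical, a handful of discrete observation codes stand in for points in a learned latent space, the per-viewpoint likelihood tables are assumed to be known, and observations are assumed conditionally independent given the state.

```python
import numpy as np


def entropy(p):
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    p = np.asarray(p, dtype=float)
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())


def expected_information_gain(belief, likelihood):
    """Mutual information between state and observation for one viewpoint.

    belief:     shape (S,),   current P(state)
    likelihood: shape (S, O), assumed-known P(observation code | state)
    """
    joint = belief[:, None] * likelihood   # P(state, obs)
    p_obs = joint.sum(axis=0)              # P(obs)
    expected_posterior_entropy = sum(
        p_o * entropy(joint[:, o] / p_o) for o, p_o in enumerate(p_obs) if p_o > 0
    )
    return float(entropy(belief) - expected_posterior_entropy)


def information_pursuit(prior, likelihoods, observe,
                        entropy_stop=0.2, min_gain=1e-3, max_steps=10):
    """Greedy active-sensing loop that returns the final belief and an audit trail."""
    belief = np.asarray(prior, dtype=float)
    trail = []
    for step in range(max_steps):
        if entropy(belief) <= entropy_stop:
            break  # already certain enough
        gains = [expected_information_gain(belief, lik) for lik in likelihoods]
        view = int(np.argmax(gains))
        if gains[view] < min_gain:
            break  # no viewpoint is expected to help, so stop looking
        obs = observe(view)  # embodied query: move the sensor, read a code
        posterior = belief * likelihoods[view][:, obs]
        if posterior.sum() == 0:
            raise ValueError("observation has zero probability under the model")
        belief = posterior / posterior.sum()  # Bayes update
        trail.append({"step": step, "view": view, "obs": obs,
                      "expected_gain_bits": gains[view],
                      "entropy_after_bits": entropy(belief)})
    return belief, trail


# Hypothetical setup: 3 candidate states, 2 viewpoints, 2 observation codes.
likelihoods = [
    np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]),  # view 0 singles out state 0
    np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]),  # view 1 singles out state 2
]
true_state = 1
observe = lambda view: int(np.argmax(likelihoods[view][true_state]))

belief, trail = information_pursuit([0.5, 0.25, 0.25], likelihoods, observe)
for entry in trail:
    print(entry)
print("final belief:", belief)
```

Each entry in `trail` records which viewpoint was chosen, the expected gain that justified it, and the uncertainty that remained afterwards, which is the kind of reviewable record the paragraph above describes. The two thresholds, `entropy_stop` and `min_gain`, are arbitrary illustrative values.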

Research conducted with the Maseeh Department of Mathematics and Statistics, Portland State University.

What this means for production

- Faster commissioning. Turn 300 hours of training data into closer to 30, and you change the economics of automation.

- Debuggability on the floor. Posterior uncertainty, mutual information (MI) scores, and the sequence of viewpoints are all inspectable, giving you a station you can maintain, not babysit.

- Robustness to variation. IP is built around explicitly managing uncertainty: the robot responds to ambiguity by gathering a better view, not by guessing.

- Software-first automation. Versionable logic, measurable performance, and controlled deployment, with an audit trail that doesn't require hand-tuned rules.

Where IP applies

- Bin picking with occlusions

- Inspection steps where a single view is unreliable

- Rework and repair stations with variable part presentation

- Assembly tasks where "is it seated?" is ambiguous without the right viewpoint

- Any station where cycle time variance is driven by unstructured "looking again"

IP turns that "looking again" into a principled algorithm: look again only when the math says it will buy you certainty.

Where it started

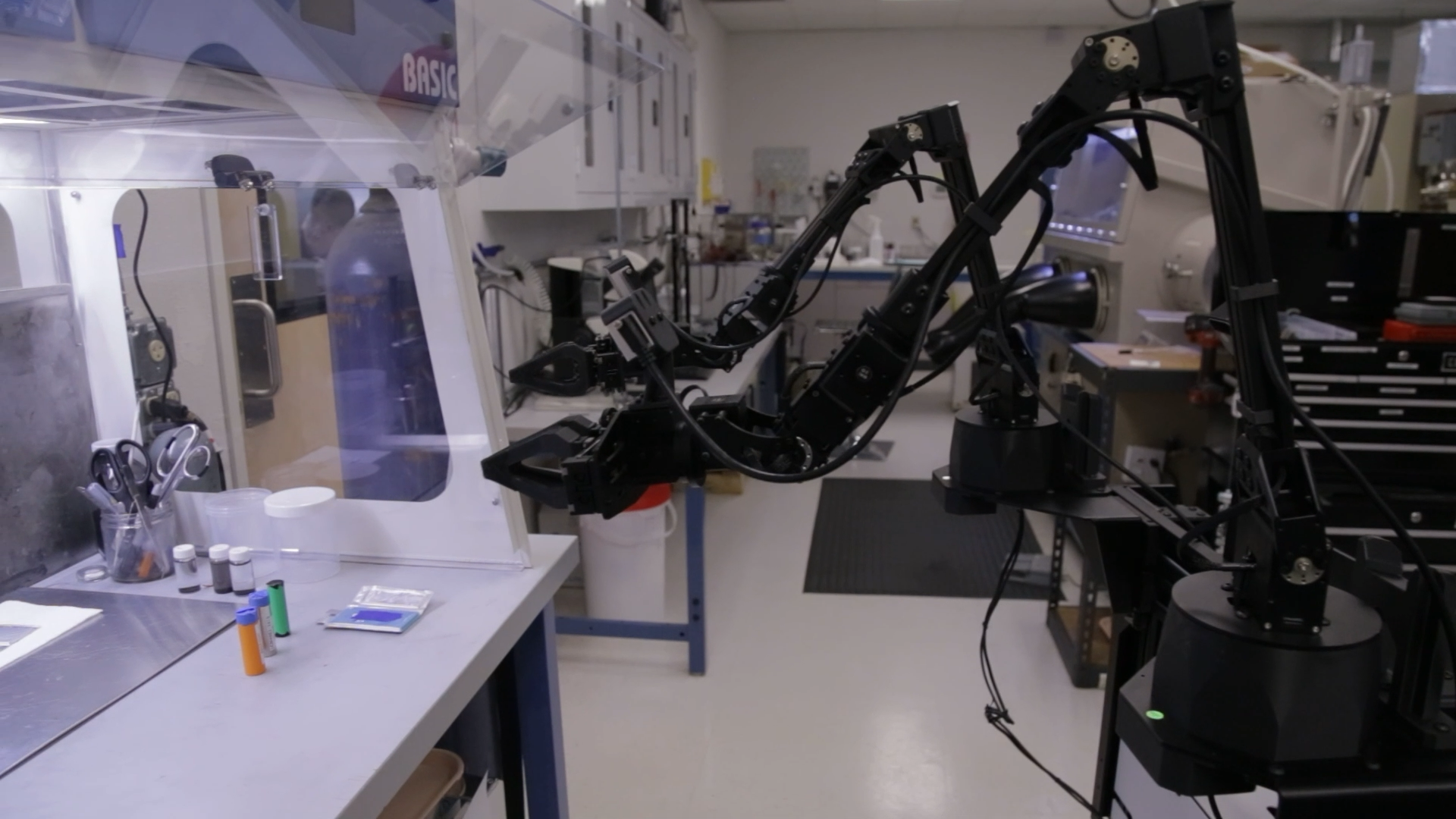

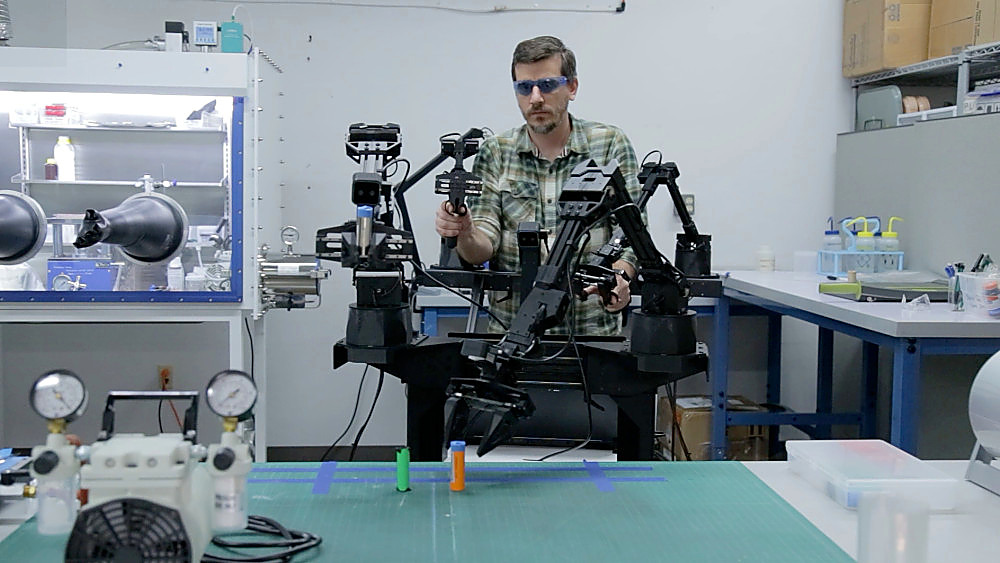

The product grew out of battery-cell inspection research — working in gloveboxes, building teleop rigs, and discovering that the hardest part of automation isn't the robot. It's the perception.

Technical Expertise

- Image & Signal Processing

- Beamforming, Doppler modes, seismic noise removal on supercomputing clusters, and sub-micron wafer defect detection, spanning defense-grade satellite imaging at Northrop Grumman through cardiac ultrasound pipelines at YorLabs

- Machine Learning & Deep Learning

- PyTorch, YOLO, vision transformers, and edge-deployed ML models, grounded in a Ph.D. in Applied Physics (Rice University) and a Graduate Certificate in Applied Statistics (Portland State University)

- Algorithm Development

- MATLAB and Python: production algorithms deployed in Intel fabs (Applied Materials), National Missile Defense systems (Northrop Grumman), and real-time medical imaging (YorLabs)

- Embedded & Real-Time Systems

- Software architecture on the NVIDIA Jetson ARM system-on-module (SoM); FPGA integration; defined frame rate and pipeline latency requirements for production cardiac ultrasound hardware

- Applied Physics & Optics

- Ph.D. in Applied Physics (Rice University); nanophotonics, FEM modeling with COMSOL, UV-vis, Raman, SEM, AFM — first-principles physical reasoning applied to every system he builds

- Physics Engines & NVIDIA Tooling

- NVIDIA Omniverse, Isaac Sim, and Cosmos for high-fidelity synthetic data generation and digital twin development — the foundation of RCR's physical AI training pipeline

Experience

Rose City Robotics

Co-founder & Chief Executive Officer

February 2024 – Present

- Co-founded RCR to apply 20 years of computer vision and algorithm development to physical AI and industrial inspection

- Architecting CAD-driven machine vision systems and synthetic data pipelines for physical AI training; owning technical strategy through customer deployment

YorLabs

Staff Engineer – Ultrasound

March 2021 – January 2024

- Lead developer responsible for software architecture of a new cardiac ultrasound machine based on the NVIDIA Jetson ARM system-on-module

- Developed beamforming and image processing algorithms to implement B-mode, color Doppler, and pulsed-wave Doppler ultrasound modes

- Performed system integration of work products from experts on image quality, front-end UI, and FPGA/hardware

- Defined user requirements including frame rate and pipeline latency; ensured system architecture could perform as expected

- Demonstrated system performance in lab and during two animal trials, leading to successful Series B and C investment round closes

Koioc Incorporated

Co-founder & Chief Technology Officer

July 2019 – March 2021

- Built eDiscovery tools for small law firms and solo practitioners — document search as easy as internet search

United States Army Reserve

Major (Retired)

1997 – September 2019 (22 years)

- Direct supervisor responsible for health, welfare, and morale of twelve soldiers

- Provided oversight and coordination of all communications and IT needs of an Expeditionary Brigade (~400 soldiers)

- Subject matter expert on radio, satellite, and network infrastructure

- Maintained accountability for $100,000+ of automation and signal equipment (HF/VHF radios, GPS, encryption devices)

- Previously served as Adjunct Professor of Military Science at University of Portland

- Provided logistical support for the mobilization of 3,000+ soldiers for Operations Enduring Freedom and Iraqi Freedom

Applied Materials

Senior Algorithm Developer

March 2012 – February 2017

- Designed algorithms in MATLAB for the UVision and SEMVision platforms, microscopes for wafer inspection and defect review deployed in Intel fabs and other semiconductor manufacturing facilities

- Analyzed algorithmic gaps at a customer site to focus R&D efforts, earning Employee of the Quarter (Q3 2012)

- Designed edge segmentation algorithm for Discrete Measurement Server product line

- Designed image enhancement backlight algorithm on a short loop to secure $6M in SEMVision tool sales

CGGVeritas

Staff Seismic Imager

October 2009 – July 2011

- Employed Harpertown, Nehalem, and Westmere supercomputing clusters to generate 3D subsurface images

- Applied wave propagation theory and signal processing techniques to remove noise from seismic data

- Managed multi-terabyte seismic datasets as project disk manager

- Delivered weekly presentations and authored technical reports for customer meetings

- Edited technical articles for publication in Geophysics and The Leading Edge

Automated Creation Technologies

Partner

2007 – 2008

- Founded company to manufacture open source 3D printers based on a freeform fabrication concept

- Finalist at U.T. Tyler's New Venture Business Plan competition

- Produced $15,000 in revenue in the first year

Rice University

Graduate Research Assistant

2002 – 2008

- Tool owner of Ar+ laser, 815 nm diode laser, monochromators, UV-vis spectrometer, FT-IR spectrometer, and all LabVIEW controlled equipment

- Designed, machined, and constructed experimental apparatuses using optical components, electronics, and high vacuum equipment

- Fabricated ceramic and metallic nanoparticles using wet chemistry methods (distillation, centrifugation, sonication, rotary evaporation, electroless metal plating)

- Deposited thin films using sputtering and e-beam evaporation in a class 100 clean room

- Characterized nanoparticles using UV-vis, SEM, AFM, FT-IR, Raman, NMR, XRD, dark field microscopy, and chromatography

- Modeled nanoparticle electromagnetic and thermal responses using COMSOL on a Xeon-based cluster

- Trained and supervised undergraduates and new graduate students

Northrop Grumman Corporation

Associate Engineer

February 2001 – July 2002

- Designed missile state vector prediction algorithms for National Missile Defense infrared satellites

- Triangulated ballistic missile parameters in simulation using least squares estimation

- Wrote software in Perl and MATLAB to automate simulation processes

Applied Research Labs

Senior Student Associate

January 2000 – December 2000

- Performed experiments to test sonar array performance using underwater objects and multi-directional sonar datasets

- Modeled wideband signal beamformer for a linear sonar array

- Improved sonar images using signal processing techniques

Education

- Ph.D., Applied Physics, Rice University, 2006 – 2008

- M.S., Applied Physics, Rice University, 2002 – 2006

- Graduate Certificate, Applied Statistics, Portland State University, 2016 – 2020

- B.S., Electrical and Computer Engineering, University of Texas at Austin, 1998 – 2000

Certifications

- Improving Deep Neural Networks: Hyperparameter Tuning, Regularization and Optimization

- Convolutional Neural Networks

- Machine Learning

- Machine Manufacturing Technology

Publications

- Photothermal efficiency of nanoshells and nanorods for clinical therapeutic applications

- Optimized plasmonic nanoparticle distributions for solar spectrum harvesting

- Fluorescence Enhancement by Au Nanostructures: Nanoshells and Nanorods

Applied Research

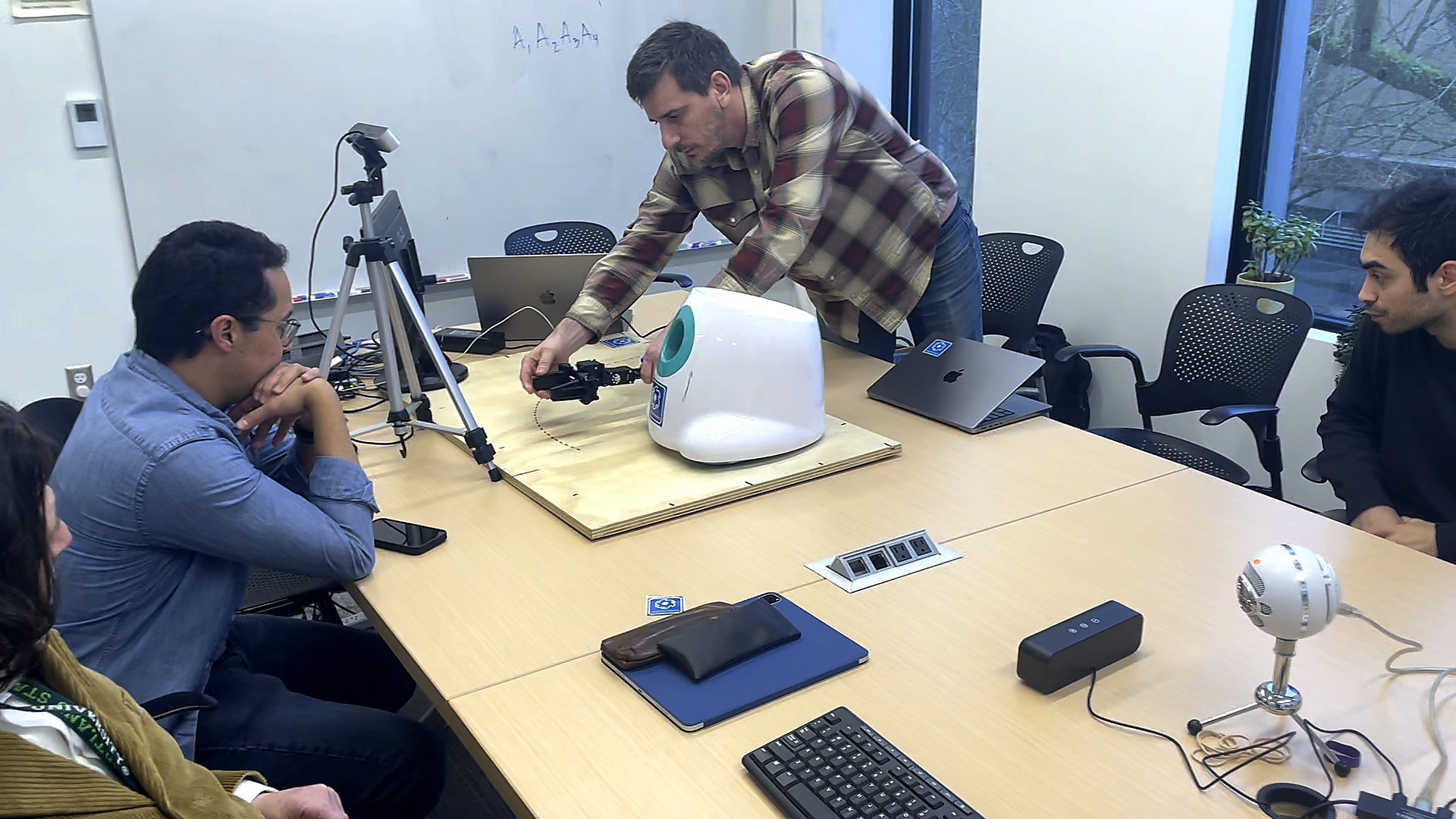

Humanoid manipulation research and partner integrations — building the data collection and systems work that feeds into production.

ShopTalk 2025 — RCR with autonomous retail robots of the future

Unitree G1 humanoid robot — autonomous skill building at Rose City Robotics Machine Vision Lab, Portland State University